Definitions and use cases for a digital twin in pharma and biotech manufacturing abound. In the simplest terms, a digital twin is an in silico model of a physical asset or process. Digital twins are employed in product and process development to facilitate agile development, technical transfer, and process improvement while reducing waste, improving quality, and bringing a product to market faster.

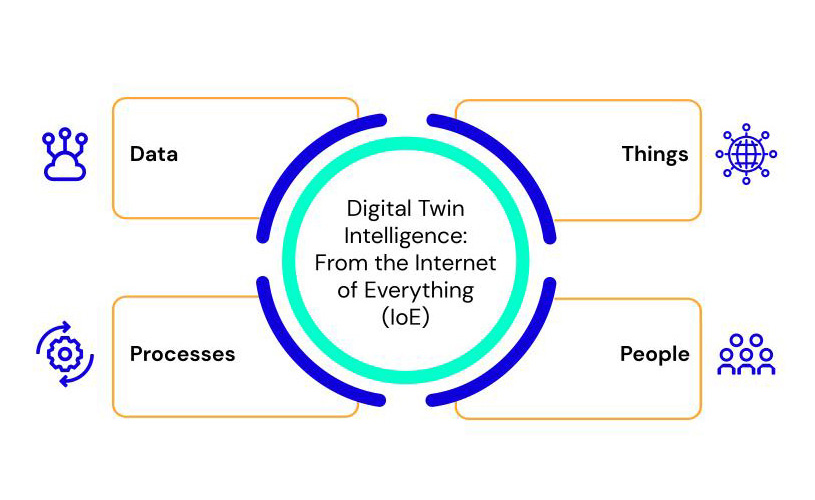

To make this concept more tangible, imagine a “smart” automobile such as a Tesla. While the car itself continuously produces and transmits data on energy consumption, performance, maintenance, and more, it also captures the environmental and passenger context: driver behavior, road and weather conditions, driver preferences, and so on. The car and the context are inextricably linked, and combining insights from the outside in and the inside out enables better management and, ultimately, a better driving experience. This holistic capture of data, processes, things, and people (the so-called ‘Internet of Everything’) can be used to create a digital model that predicts and recommends outcomes based on changes within the car or its context. We can think of this as a digital twin.

A digital twin for a biopharma process works in the same way. Take, for example, a bioreactor unit, whether it is standalone or part of a multi-unit process train, and whether it is in process development or active in commercial production. To create a digital twin with the goal of accelerating process development and optimization, the model needs all data from the equipment itself as well as data on raw materials, utilities, external context, and more in order to be meaningful. All this information must be integrated in the cloud to enable thorough contextualization and to close the essential feedback loop that continuously updates the model so it can adapt to new scenarios in real time.

This ‘feedback loop’ can only happen with powerful Artificial Intelligence (AI), given the complexity of the data involved and the requirement to generate valuable and actionable insights. The result of this approach is a full and intelligent digital version of your bioreactor that can proactively monitor its operation and identify the relevant factors that impact product quality, process safety, and throughput. And it doesn’t stop there. Because the digital twin knows “everything,” it can provide real-time predictions for every meaningful variable and for the cascading impact of changes in the process. The outcome is actionable insights that enable proactive decisions to avoid deviations, reduce cost and material waste, and improve performance and product quality, all while constantly maintaining safety and compliance.
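
To make the feedback loop concrete, here is a minimal Python sketch under heavy assumptions. The class `BioreactorTwin`, its parameters, and the helper `run_stream` are hypothetical names invented for illustration, and the simple linear model with a normalized least-mean-squares update stands in for whatever AI model a real digital twin would use. It only shows the predict, compare, and adapt cycle described above.

```python
from dataclasses import dataclass, field


@dataclass
class BioreactorTwin:
    """Toy twin: a linear model of one quality attribute, updated online.

    All names and default values here are illustrative assumptions.
    """
    n_inputs: int
    learning_rate: float = 0.5    # hypothetical step size, must be in (0, 2)
    alert_threshold: float = 0.5  # hypothetical limit on prediction error
    weights: list = field(default_factory=list)

    def __post_init__(self):
        if not self.weights:
            self.weights = [0.0] * self.n_inputs

    def predict(self, inputs):
        """Predict the quality attribute from the current process inputs."""
        return sum(w * x for w, x in zip(self.weights, inputs))

    def update(self, inputs, measured):
        """Compare the prediction with the measurement, then adapt the model.

        Returns True when the prediction error exceeded the alert threshold.
        """
        error = measured - self.predict(inputs)
        norm = sum(x * x for x in inputs) or 1.0  # avoid division by zero
        step = self.learning_rate * error / norm
        self.weights = [w + step * x for w, x in zip(self.weights, inputs)]
        return abs(error) > self.alert_threshold


def run_stream(twin, stream):
    """Feed (inputs, measured) pairs to the twin and collect alert flags."""
    return [twin.update(inputs, measured) for inputs, measured in stream]
```

In this sketch the twin raises alerts while its predictions are still far from the measurements and goes quiet once the model has adapted, which is the behavior a monitoring loop would act on.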

Because this is pharma, GxP compliance throughout the lifecycle of the data, the AI models, and the digital twin application is absolutely critical. This creates complexity, especially when the actionable recommendations must be applied in real time, as in continuous manufacturing and Continued Process Verification (CPV).

With that in mind, selecting the appropriate technology and defining the best approach to develop your digital twin is vital for a successful implementation.

Planning for your Digital Twin Strategy - 4 Best Practices

- Ensure GxP-compliant digital compatibility so that you can take action on the insights. GxP compliance pertains not only to a data lake or other central repository but also to how your data, models, and applications are governed over their life cycle. Ensure that GxP is at the core of the entire solution (connect and we can share more).

- Data acquisition cannot be limited to systems data; it must be holistic and include manual operations, the external environment, raw materials, utilities, and any other applicable external data.

- The best and only way to understand and predict biosystems for transformative value is through the application of multivariate analyses using AI.

- Consider the industrial scale-up of your digital twin strategy: ensure you are future-proofing for Continued Process Verification (CPV) and beyond (we can advise, design and empower your roadmap - just drop us a line).
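
As a rough illustration of the multivariate analysis mentioned in the list above, the sketch below applies principal component analysis (PCA) to batch data with NumPy. PCA is a classical multivariate method used here as a simple stand-in, not the specific AI approach the article has in mind. The function name `pca_summary`, the variable labels in the comments, and the data itself are assumptions; the data is synthetic and generated only to make the example runnable.

```python
import numpy as np


def pca_summary(batch_data, n_components=2):
    """Autoscale batch data and project it onto its leading principal components.

    Returns (scores, loadings, explained_variance_ratio).
    """
    X = np.asarray(batch_data, dtype=float)
    # Autoscale: zero mean and unit variance per process variable.
    X = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)
    U, S, Vt = np.linalg.svd(X, full_matrices=False)
    explained = S**2 / np.sum(S**2)
    scores = U[:, :n_components] * S[:n_components]
    return scores, Vt[:n_components], explained[:n_components]


# Synthetic, illustrative data only: 50 batches x 4 process variables.
rng = np.random.default_rng(0)
driver = rng.normal(size=50)  # hidden factor shared by two variables
data = np.column_stack([
    driver + 0.1 * rng.normal(size=50),   # e.g. temperature (assumed label)
    -driver + 0.1 * rng.normal(size=50),  # e.g. dissolved oxygen (assumed label)
    rng.normal(size=50),                  # e.g. pH (assumed label)
    rng.normal(size=50),                  # e.g. feed rate (assumed label)
])

scores, loadings, explained = pca_summary(data)
```

Because two of the four synthetic variables share one hidden driver, the first component captures their joint behavior. This is the kind of relationship a one-variable-at-a-time review would miss.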

For more information, register today for this free webinar at Xtalks, now available on demand:

Presenting are Dr. Toni Manzano, CSO and Co-Founder, Aizon, and Luiza Mukaeda, Industry Specialist, Aizon.

We hope you will join, and please pass this along to your twins and/or colleagues!

By Luiza Mukaeda, Solutions Engineer & Industry Specialist